Clangd With Unity Builds

Sat 14 January 2023

Michael Labbe

#code

So, you’re programming in C/C++ and your editor is live-checking your code, throwing up errors as you type them. Depending on your editor you’re either getting red underlines or some sort of marker in the margins that compels you to change it. But how does this source code processing engine work, and what can you do when things go off the rails when you attempt something like a unity build?

You may have configured your editor to use a language server called clangd, or it may have been configured for you.

Clangd also offers other benefits, such as go-to-definition and code completion. It runs as a subprocess of your editor, and that means it’s doing its indexing work off the main thread as you would hope.

Clangd can be configured to work with VSCode and Emacs amongst others.

Problem 1: Clangd doesn’t understand how you build

You’re coding along and need to include a bunch of files from a library, so you add a new include path to your build with -Isome_lib/. Unfortunately, clangd doesn’t nkow that you build with this, so a bunch of ugly, incorrect compile errors pop up in your editor.

If you employ your Google-fu, you land on a page that says that Clangd lets you specify your compile flags in a yaml file called .clangd. But, wait! There is a better way. You really want to automate this, or you’ll have to maintain two sets of all your compile flags, which sounds like one of the least enjoyable things you could possibly fritter your time away on.

The good news is that Clangd, alternatively, reads in a compile database, which is just a JSON-structured list of the commands needed to compile each file in a given way. There are plenty of tools that can generate a compile database automatically. For example, if you can output Ninja Build files, you are one step away:

ninja -t compdb > compile_database.jsonClangd recursively searches up the tree to find this file, and uses it to try and compile your files. If a source file exists in your codebase but isn’t in the compile database, it will infer the flags needed to compile it from other commands in adjacent files in this directory. This is most likely what you want, and it’s a great starting point.

You should run this command as a post-build step, or after updating your source repo in order to automatically update your compile database so you never have to worry about keeping your compile flags in sync again.

Problem 2: Clangd adds annoying warnings you don’t want

Sometimes your on-the-fly error checking is too quick on the draw. It tells you about warnings you’d rather only see when you’re compiling. Warning about unused functions is one such example — you declare a function as static and intend to call it. But, before you have a chance to call it, it throws a distracting error. Let me finish typing, Clangd!

One option is to use a compile database post processor that ingests the Clang database that is spat out from Ninja, letting you make the tweaks you need. Now you have automation and customization.

I wrote a compile database post processor that you pipe your output through. Now your command looks like:

ninja -t compdb | \ cleanup-compdb > compile_commands.jsonLet’s say you want to disable the warning for unused functions. cleanup-compdb can append a flag for that to each compile command:

ninja -t compdb | \ cleanup-compdb \ --append-arguments="-Wno-unused-function" \ > compile_commands.jsonProblem 3: Clangd doesn’t understand unity builds

Let’s start by defining the problem.

You have a c “root” file that #includes one or more “sub” files which produce a single translation unit. Clangd now deftly handles the root file and locates the includes. However, Clangd has no concept of c source that is not a stand alone translation unit, so the sub files generate more benign errors than you can count.

Clangd doesn’t have support for unity builds. However, we can instruct it to resolve the necessary symbols as if the sub files were each their own translation unit for error checking purposes.

To illustrate this technique let’s define the root translation unit as root.c, and the sub units as sub0.c, sub1.c, and so on.

An additional file root.unity.h will be created that has all of the common symbols for the translation unit.

The steps to factoring your Unity build for Clangd

-

Create a

root.unity.hfile that starts with#pragma onceand includes all common symbols in the translation unit, including system headers and forward declarations. -

Create

root.cwhich includesroot.unity.hbefore anything else. Doing anything else in this file is entirely optional. -

Create

sub0.cand start it off with#pragma onceand then includeroot.unity.h. -

Include

sub0.cat the bottom ofroot.unity.h. -

Repeat steps 3 and 4 for any other sub-files in the unity build.

This has the following results:

- For real compilations,

root.ccompiles and includessub0.cand ignores the second include ofroot.unity.hthat comes fromsub0.cbecause that file starts with#pragma once. - For Clangd processing of

root.c, the previously described compilation works similarly and everything just works. - For Clangd processing of

sub0.cas the faux-main translation unit, it includesroot.unity.hand all symbols resolve, thus fixing the litany of errors.

One hiccup remains. Clangd now emits this warning:

warning: #pragma once in main fileThis is but a hiccup for us if we are using a compilation database postprocessor as described in problem 2. Simply append -Wno-pragma-once-outside-header to the list of warnings we want to ignore.

Conclusion

Jumping to symbols in an IDE and getting accurate compilation previews is a problem that has been imperfectly solved for decades, even with commercial plugins in Visual Studio. This blog post proposes a few tweaks to the language server Clangd which, in exchange for paying an up-front effort cost, automates the maintenance of a compilation database that can be tweaked to suit your specific needs.

In taking the steps described in this blog post, you will gain the knowhow to adapt the existing tools to a wide range of C/C++ codebases.

I propose a new technique for unity builds in Clangd that operates without the inclusion of any additional compiler flags. If you are able to factor your unity builds to match this format, it is worth an attempt.

Low Overhead Structured Logging in C

Mon 27 December 2021

Michael Labbe

#code

Sure, we call it printf debugging, but as projects get more complex, we aren’t literally calling printf. As our software is adopted we progress to troubleshooting user’s logs. If we’re granted enough success we are faced with statistically quantifying occurrences from many users logs via telemetry.

This is best served by structured logging. There are many formal definitions of structured logs, but for our purposes a simple example will do. Say you have this log line:

Player disconnected. Player count: 12A structured log breaks fields out to make further processing and filtering trivial. This example uses JSON but it can be anything consistent that a machine can process:

{ "file": "src/network.c", "line": 1000, "timestamp": "1640625081", "msg": "Player disconnected.", "player_count": 12}The structured version looks like a message that can be processed and graphed by a telemetry system, or easily searched and filtered in a logging tool.

Coming from backend development where structured logging is expected and common, I wanted this function in my next game client. Because client performance is finite, I sought a way to assemble these messages using the preprocessor.

In practice, a fully structured message can be logged in one single function call without unnecessary heap allocation, unnecessary format specifier processing or runtime string concatenation. Much of the overhead in traditional loggers can be entirely avoided by building the logic using the C preprocessor.

The rest of this post illustrates how you might write your own structured logger using the C preprocessor to avoid runtime overhead. Each step takes the concept further than the one before it, building on the previous implementation and concepts.

Rather than supply a one-size-fits-all library, I suggest you roll your own logger. Stop when the functionality is adequate for your situation. Change the functionality where it does not fit your needs.

Step Zero: printf()

The most basic logger just calls printf(). For modest projects, you’re done! Just remember to clean up your logs before you ship.

Step One: Wrap Printf()

It is useful to wrap printf() in a variadic macro, which can be toggled off and on to reduce spam and gain performance on a per-compilation unit basis.

As of C99, we have variadic macros. The following compiles on Visual C++ 2019, clang and gcc with --std=gnu99.

#define LOGF(fmt, ...) fprintf(stdout, fmt, __VA_ARGS__);#define LOG(comment) puts(comment);Why do we need a special case LOG() that is separate from LOGF()? Unfortunately, having zero variadic arguments is not C99 compliant and, in practice, generates warnings. For conciseness, the rest of this post only provides examples for LOGF(). However, a full implementation would handle both the zero argument and the non-zero argument cases wholly.

Step Two: Route Logs

Having logs print to stdout, also append to disk or submit to a telemetry ingestor are all useful scenarios. Changing where logs are emitted can be reduced to a series of preprocessor config flags.

// config#define LOG_USE_STDIO 1#define LOG_USE_FILE 1// implementation#if LOG_USE_STDIO# define LOG__STDIO_FPRINTF(stream, fmt, ...) \ fprintf(stream, fmt, __VA_ARGS__);#else# define LOG__STDIO_FPRINTF(stream, fmt, ...)#endif#if LOG_USE_FILE# define LOG__FILE(stream, fmt, ...) \ fprintf(stream, fmt, __VA_ARGS__); \ fflush(stream);#else# define LOG__FILE(stream, fmt, ...)#endifNote the call to fflush() ensures the stream is correctly written to disk in the result of an unscheduled process termination. This can be essential for troubleshooting the source of the crash!

Now we have two macros LOG__STDIO_FPRINTF() and LOG__FILE() which either log to the respective targets or do nothing, depending on configuration. The double underscore indicates the macro is internal, and not part of the logging API. Replace the previous LOGF() definition with the following:

#define LOGF(fmt, ...) \{ \ LOG__STDIO_FPRINTF(stdout, fmt, __VA_ARGS__); \ LOG__FILE(handle, fmt, __VA_ARGS__); \}Opening handle prior to calling LOGF() is an exercise left up to the implementer.

Step Three: Log Levels

Limiting logging to higher severity messages is useful for reducing spam. Traditional logging packages call into a variadic function, expending resources before determining the log level does not permit the log line to be written.

We can do better — our logging system simply generates no code at all if the log level is too low.

#define LOG_LEVEL_TRACE 0#define LOG_LEVEL_WARN 1#define LOG_LEVEL_ERROR 2#if !defined(LOG_LEVEL)# define LOG_LEVEL LOG_LEVEL_TRACE#endifThe test for LOG_LEVEL being pre-defined allows a user to control the log level on a per-compilation unit basis before including the logging header file. This is extremely useful for getting detailed logs for only the section of code you are currently working on.

With this in mind, delete the LOGF definition, and replace it with the following:

#define LOG__DECL_LOGLEVELF(T, fmt, ...) \{ \ LOG__STDIO_FPRINTF(stdout, T ": " fmt, __VA_ARGS__); \ LOG__FILE(handle, T ": " fmt, __VA_ARGS__); \}#if LOG_LEVEL == LOG_LEVEL_TRACE# define LOG_TRACEF(fmt, ...) LOG__DECL_LOGLEVELF("TRC", fmt, __VA_ARGS__);#else# define LOG_TRACEF(fmt, ...)#endif#if LOG_LEVEL <= LOG_LEVEL_WARN# define LOG_WARNF(fmt, ...) LOG__DECL_LOGLEVELF("WRN", fmt, __VA_ARGS__);#else# define LOG_WARNF(fmt, ...)#endif#if LOG_LEVEL <= LOG_LEVEL_ERROR# define LOG_ERRORF(fmt, ...) LOG__DECL_LOGLEVELF("ERR", fmt, __VA_ARGS__);#else# define LOG_ERRORF(fmt, ...)#endifAdding or changing log levels is an exercise left up to the user. Do what works for you.

Note the obscure T ": " fmt syntax. Rather than pay the overhead of invoking snprintf(), or allocating heap memory to prefix the log line with “TRC”, “WRN” or “ERR”, we use preprocessor concatenation to generate a single format string at compile time.

At this point, you should be able to write the following code:

#define LOG_LEVEL 0#include "log.h"LOG_TRACEF("Hello, %d worlds!", 42);and see the log line

TRC: Hello, 42 worlds!on stdout, and in your log file.

Step Four: Structuring the Logs

C gives us macros for the file and line each log line was generated on. Before we go on to support specific structured fields, we can already generate a structured log with four fields:

- The log level

- The file the log message originated from

- The line of code the log message originated from

- The formatted message itself.

At the beginning of this post a JSON-structured log was used as an example of a structured log. However, there is a runtime cost to escaping logged strings in order to produce valid JSON in all cases that we would like to avoid. Consider:

LOG_TRACE("I said \"don't do it\"");Unless processed, this would produce the ill-formatted JSON string

"I said "don't do it""Structured Log Message Specification

Rather than generate structured JSON, another logging format is used, which can be postprocessed into JSON with a small script if necessary.

- A logging message starts with the 3-byte logging level and a newline. Eg: “TRC” or “WRN”, followed by a newline.

- Each subsequent field’s key is a tab following by alphanumeric characters, a colon and a single space character.

/^\t[a-zA-Z0-9]+:\x20/

- Immediately following, the structured field’s value is every byte until the three byte end-of-line sequence

0x200x1A0x0Ais encountered./.+\x20\x1A\n/

- Further fields follow until the final field, which is terminated with the two-byte sequence

0x1A0x1A./\x1A\x1A$/.

- Note that the final field does not also contain the three byte end-of-line sequence.

This results in a structured log message which is machine-parsable, but is also human readable on popular Linux UTF-8 terminals, as well as DOS codepage 437 (the DOS Latin US codepage). Unless your log messages contain the highly obscure byte sequences described above (unlikely!), we do not need to do any runtime escaping, even for newlines.

Structured Log Implementation

A structured log is one long string that is concatenated in the preprocessor. Here we provide a mechanism to let the user choose between flat and structured with a preprocessor flag.

// config#define LOG_USE_STRUCTURED 1// implementation#define LOG__EOL "\x20\x1A\n"#define LOG__EOM "\x1A\x1A\n"#define LOG__FLAT(file, line, level, msg) \ level " " file ":" line " |" msg "\n"#define LOG__STRUCTURED(file, line, level, msg) \ level LOG__EOL \ "\tfile: " file LOG__EOL \ "\tline: " line LOG__EOL \ "\tmsg: " msg LOG__EOM "\n"#if LOG_USE_STRUCTURED# define LOG__LINE(file, line, level, msg) \ LOG__STRUCTURED(file, line, level, msg)#else# define LOG__LINE(file, line, level, msg) \ LOG__FLAT(file, line, level, msg)#endifNow a call to LOG__LINE() generates either a flat or structured string which can be passed into a printf-style format specifier parameter. We redefine LOG__DECL_LOGLEVELF() as follows:

#define LOG__XSTR(x) #x#define LOG__STR(x) LOG__XSTR(x)#define LOG__DECL_LOGLEVELF(T, fmt, ...) \{ \ LOG__STDIO_FPRINTF(stdout, \ LOG__LINE(__FILE__, LOG__STR(__LINE__), #T, fmt), \ __VA_ARGS__); \ \ LOG__FILE(handle, \ LOG__LINE(__FILE__, LOG__STR(__LINE__), #T, fmt), \ __VA_ARGS__); \}Note the use of LOG__STR(__LINE__) is necessary to stringify __LINE__, which is an integral constant. Because we concatenate it at compile time, it must be stringified.

Step Five: Structured Logging With Custom Fields

Structured logging of custom fields pushes the boundaries of what can be done by C preprocessing. Internally, we run a precompile step that:

- Defines C-style structs in a custom language

- Generates a C header file with those structs

- Generates a C macro that logs each field of the struct in a structured manner

- Defines visualizations for it in our telemetry visualization software

Building such a script and hooking it up as a precompile step is out of scope for this post. However, it is possible to perform this work incurring a single snprintf() at runtime.

Putting It All Together

Despite all of these changes and improvements, a logging call to print to stdout will still result in a single function call. To test that, we view the preprocessed output of a LOG_TRACEF() call generated with clang -E:

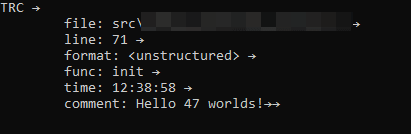

// logging call in sourceLOG_TRACEF("Hello %d", 42);// output of clang's c preprocessor (newlines added){ fprintf(stdout, "\"TRC\"" "\x20\x1A\n" "\tfile: " "main.c" "\x20\x1A\n" "\tline: " "10" "\x20\x1A\n" "\tmsg: " "Hello %d\n" "\x1A\x1A\n" "\n", 42);; ; };;Success — we can see only one call being made and it’s to the library function fprintf to log the message. On Windows console it looks something like this:

The little arrows are the end-of-line and end-of-message markers, essential for further machine processing.

Processing Structured Logs

As explained, further processing of logs into JSON may be a necessary step before telemetry ingestion. This Python script accepts structured logs streamed from stdin. It outputs properly formatted JSON.

Sample Code

Here is sample code assembled from the snippets in this post.

License

All of the sample code in this blog post and in the linked gist are Copyright © 2021 Frogtoss Games, Inc., and are free to use and modify under the terms of the MIT license.

The Most Impactful Developer Changes I Made in the 2010s

Wed 01 January 2020

Michael Labbe

I am using the new decade to reflect on the most important choices I made as a developer in the 2010s. For the sake of context, I have been hobby programming since 1986, and have had a career programming doing 1999. Games have been the central context for my problem solving, and for the 2000s I largely worked on C++ in core engines and networking on AAA titles, with a side of Python tooling. My work targeted Xbox 360, Playstation 3, Windows and Linux.

I am a developer who does not change languages, roles or rebuild codebases lightly. The benefit of the new approach has to offset the abandonment of the investment in the old which is a tough thing to justify.

That being the acknowledged high bar, there have been a number of improvements I have permitted over the past decade. Many changes optimize for efficiency, but let’s be honest — some are about comfort but do not substantially move the productivity needle in one direction or another. I believe these are still worthwhile for lifelong coders to pursue, within measure.

Career Focus on Core Tech and Services

If it wasn’t obvious to me by the end of the 1990s, it was certainly obvious by the end of the 2000s: the things that I liked most about making games was happening in middleware and on engine teams, and not on actual game teams very consistently.

Services (as in hosted software for development teams) are another area where I have been able to make an impact, and I really saw the strength they can provide and what it takes to keep them running at scale when I consulted with Final Strike Games.

As we enter 2020, traditional engine programmers who also deeply understand how to build and deploy highly available web services at scale are still extremely rare. Many of the interesting problems I intend to solve over the next few years are in the intersection of these two specializations. I see this as a space full of unsolved problems that have a lot of value to any game development team.

Smaller Codebases vs. Monolithic Codebase

From ‘04-‘15 I coded, ported and maintained a game engine which I largely wrote myself. It had everything in a single codebase (and to a large degree, the same address space at runtime!) This included a UI toolkit, an in-engine level editor, an original http client (predating Curl!) a texture compressor and many other goodies. None of these could be separated or built without the whole.

Whenever it was time to try some new experimental direction, I would carve into this monolith, hack in the new experiment, slowly integrating it into the whole. The result was predictable: a hard to slim down, hard to port codebase that also took awhile to compile and had a number of external dependencies which often did very little to help the shipping featureset.

Mid-decade, in light of my shift to tools and services and away from game engine programming, I committed to moving all development to multiple, smaller codebases. The rise of single header C libraries (and indeed, source/header pairs) helped persuade that a non-monolithic codebase was the best investment a coder on a small team could make in many cases.

The increased nimbleness and changeability that comes from having less intertwined code has made it far easier to ship real features to customers.

Project Generators Are Actually Very Handy

Many IDEs like Visual Studio and Xcode have project generators. The projects they generate are almost always the wrong thing and you have to go about deleting a bunch of cruft; worse than an empty project. However, it turns out, if you create your own project templates they can be huge productivity boosts. You are freed from the need to burden yourself from specifying how your project and all of its dependencies build every single time you start a new codebase. This strikes out a massive disincentive to build smaller projects.

I ratified the Native Project Standards, a set of thoughtful, partially arbitrary places to put all of the files in a project and then wrote a Project Generator for Native Project Standards which effectively enables me to build my project and all of its dependencies on a number of OSes and IDEs before I even write the first line of bespoke code.

You may disagree with the minutiae of NPS and the project generator may not do what you need it to. They haven’t been adopted by anyone but myself as far as I know. That said, I cannot overstate how impactful it has been to have every codebase I work on have exactly the same build commands with the exact same directory structures. Here is a short list of really meaningful benefits this has yielded:

- All new projects automatically hook up to a build server which builds, sanitizes, tests and deploys installers for each target platform.

- Customize your text editor to automatically know where things are once, and have it always work.

- Built-in support for sanitizers means sanitization is happening from the start.

- Every project and its supported dependencies build in each architecture and OS in release or debug from the word go.

- I haven’t had to write a Makefile or tweak a Visual Studio Solution in five years, and I’ve shipped four projects using this scheme.

I also learned that it is possible to go overboard with project generation, wherein you end up needing to fix a bug in many different codebases. Don’t go wide with your project generator until you’ve pounded out all of the major issues, and try not to generate code where referencing library code improves your agility.

C99 Is Now Primary

While I’ll still pull C++ out when necessary, I default to C99 as my implementation language. When Visual Studio added quasi-C99 support in 2013, I began seriously considering this option. It compiles quickly, you can usually tell what is going to be executed next by just reading source and, in general, languages that are simpler to parse are easier to statically analyze.

Writing a library in C produces an evergreen product that can be called from almost every language. The C ABI is the lingua franca of programming languages. This makes maintaining FFIs for popular languages like Rust and Go that much simpler.

The two main things C99 misses on that cause problems for me:

- The need to write a

realloc()scheme for each new type. - The lack of a Go-like

deferkeyword and no RAII makes cleaning up a multiple-return function a perilous endeavour.

I live with these sharp edges and use tooling to detect problems early.

I’ve written a few small programs in Rust but it is still over the horizon for me. (See my earlier comment about not making changes lightly).

Go Replaces Python

While I still use Python for the glue-it-together tasks I used Perl for in the 1990s, Go is now the language I use for services oriented development. A strongly typed language with explicit error returns goes a long way to ensuring runtime integrity of data.

A standalone, multi-threaded executable that cross compiles to every PC operating system with virtually no effort is nothing to sneeze at. It is incredibly refreshing after trying to portably distribute Python programs to non-Python programmers. This amazing feature is why Go is the language I intend to use to solve highly networked problems that need to execute in the cloud or, alternatively, on the game developer’s workstation.

Many people consider Go a quickly executing language. I have a more balanced view of where it sits on the performance spectrum. A tri-colour mark and sweep garbage collector implies volatility about collectable objects. But, I digress.

Develop From Anywhere Through Tmux

Tmux is incredibly powerful and all of the time I have invested in learning and configuring it has been given back to me in productivity and convenience. It’s not the first of its type, but it’s the first that clicked into gear for me.

If you haven’t used it, Tmux sits between your terminal (Xterm, Gnome-Terminal, etc.) and the programs you’re running in the terminal. You can split the screen, run multiple windows (tabs, basically), nest Tmux sessions, or even display the same window across multiple sessions (for remote, textual screen sharing).

All of this is useful, but the killer feature is the ability to detach and reattach a session across devices. Using this and a small Linux vm in the cloud, I am able to work anywhere in the world, provided I use console-oriented programs. (Confession: I am a 25+ year Emacs guy so this is an easy fit for me).

There are times where I’d be working on my triple monitor home workstation, and then have to go out and wait for an appointment. I’d grab my iPad, and using Blink SSH, I’d reattach the session. All of my open code files, log tails and build commands would be right there, albeit in a smaller rectangle.

Tmux won’t help you as much if you are working with graphics or if you are only developing on Visual Studio but if the type of programming you are doing works in a console window, it is extremely handy to be able to detach and reattach at will, across any device.

Visual Replay Debugging

Almost every engine has debug line drawing which is indispensible for spatial or geometric problems. However, when faced with spatial or geometric edge cases, it is useful to glean details that emerge from sub-frame stepping through an algorithm and to be able to rewind and replay the debug visualization.

None of this is particularly possible in engines I have used, so when faced with some complex tesselation work I built my own line drawing library which streamed the lines out to a file on the disk. From there, a separate tool to visualized and let you scrub through the debug lines in either direction.

I went from poring over vertex values in a debugger for hours to immediately seeing collinear points. Since then i’ve used it to build an efficient tesselator, to implement edge case-free clipping routines and to even visualize Doom subsector creation.

Being able to replay each step in an algorithm and see the results has reduced development team and possibly even brought some of these solutions into reach for me. Visual replay debugging, where the replay happens outside of the address space program performing the work, has been a clear win for me.